The AI Audit Trail

Why basic logging isn't enough for agentic infrastructure and the critical importance of context-aware audit trails.

Welcome to Day 11 of #30DaysOfTrust.


We are moving rapidly from conversational AI to agentic AI. Instead of just answering questions, autonomous agents are taking actions—calling APIs, executing code, updating databases, and managing files.

This leap in capability is incredible for productivity, but it introduces a massive visibility problem for enterprise security and SOC teams.

Imagine this scenario: A user asks their AI assistant to "clean up old drafts" in a cloud storage folder. The agent hallucinates or misinterprets the instruction and deletes a highly sensitive, active contract.

When the SOC team gets the alert and checks the logs, what do they see? Usually, just a timestamp and a note that the application's overarching Service Account or OAuth token executed a DELETE request.

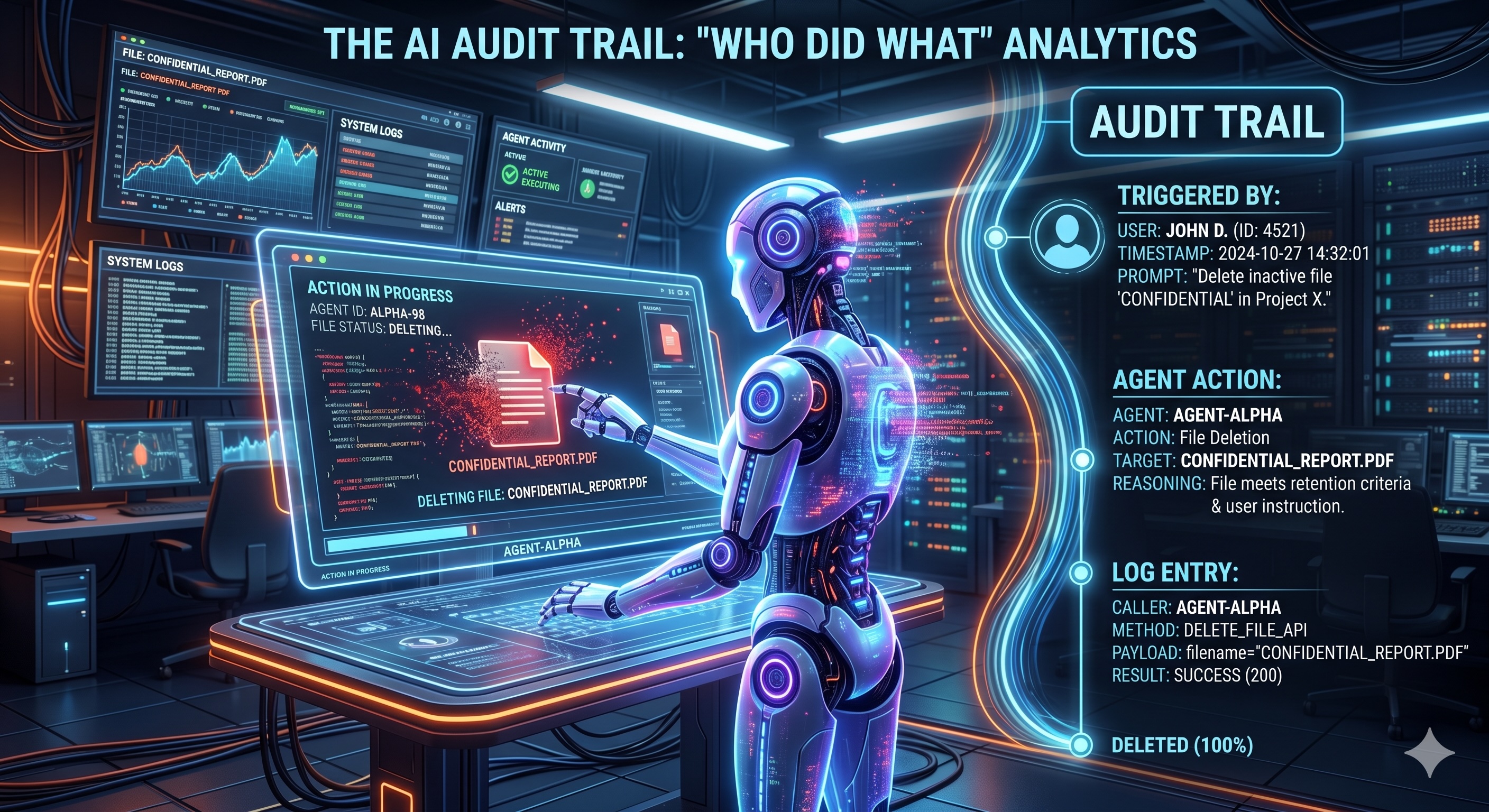

The logs don't show the user's prompt. They don't show the agent's internal reasoning. They don't show the specific tool-call context. The "Who Did What" is completely lost in the abstraction layer.

Today, we are looking at the critical importance of the AI Audit Trail and why basic logging isn't enough for agentic infrastructure.

The Problem with "God Mode" Logging ⚡

Most AI applications today are built with broad, shared permissions. When an agent acts, it acts on behalf of the application, not necessarily the specific user session. If a malicious actor executes a prompt injection attack to exfiltrate data, the standard infrastructure logs will show authorized, seemingly normal API traffic.

For a SOC analyst trying to reduce the Mean Time to Resolution (MTTR) for an incident, this lack of context is a nightmare. You cannot secure what you cannot see, and you cannot audit what you cannot trace.

The 3 Pillars of an Actionable AI Audit Trail 🏗️

To build true trust in autonomous meta-agents and digital workforces, we need an Agent Access Security Broker (AASB) layer that enforces comprehensive, context-aware logging. An effective AI audit trail must include:

- 🆔 Identity and Context Binding: Every action an agent takes must be cryptographically tied back to the originating user, the specific agent session, and the exact prompt that triggered the chain of events. A log entry shouldn't just say `Action: Delete_File`. It needs to say `User: John Doe -> Prompt: "Remove drafts" -> Agent: Workspace_Assistant -> Tool: Drive_API -> Action: Delete_File`.

- 📊 Granular Tool-Call Analytics: Visibility must happen at the tool-execution level. Before a payload is sent to an external SaaS app or internal database, the gateway must log the exact parameters the LLM generated. This allows security teams to detect anomalies, like an agent suddenly attempting to loop through and delete hundreds of records, in real time.

- 📜 Immutable Policy Evaluation Logs: It is not enough to know what the agent did; you need to know why the system allowed it. If you are using Policy-as-Code to govern your agents, the audit trail must record the policy evaluation state at the time of the action.
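
To make the three pillars concrete, here is a minimal Python sketch of what one audit entry could look like. It is an illustration under stated assumptions, not a description of how any particular AASB product works: the field names, the `append_entry` and `verify_chain` helpers, the policy ID, and the hard-coded `SIGNING_KEY` are all hypothetical. It uses an HMAC tag plus a hash chain as one possible way to bind context to an action and make tampering detectable; a real deployment might use asymmetric signatures and a key held in a KMS instead.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. In practice this would live in a KMS or HSM.
SIGNING_KEY = b"demo-key-do-not-use"
GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def canonical(record):
    """Serialize a record deterministically so hashes are reproducible."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def append_entry(log, *, user, session_id, prompt, agent, tool, action,
                 params, policy_id, policy_decision):
    """Append one context-bound, tamper-evident entry to an in-memory log."""
    record = {
        # Pillar 1: identity and context binding
        "user": user, "session_id": session_id, "prompt": prompt, "agent": agent,
        # Pillar 2: the exact tool call and the parameters the LLM generated
        "tool": tool, "action": action, "params": params,
        # Pillar 3: which policy was evaluated and what it decided
        "policy_id": policy_id, "policy_decision": policy_decision,
        # Chain each entry to the one before it
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    body = canonical(record)
    entry = {
        "record": record,
        "hash": hashlib.sha256(body).hexdigest(),
        "signature": hmac.new(SIGNING_KEY, body, hashlib.sha256).hexdigest(),
    }
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if every entry is unmodified and correctly chained."""
    prev_hash = GENESIS
    for entry in log:
        body = canonical(entry["record"])
        expected_sig = hmac.new(SIGNING_KEY, body, hashlib.sha256).hexdigest()
        if entry["record"]["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(body).hexdigest() != entry["hash"]:
            return False
        if not hmac.compare_digest(expected_sig, entry["signature"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(
    audit_log,
    user="John Doe", session_id="session-001", prompt="Remove drafts",
    agent="Workspace_Assistant", tool="Drive_API", action="Delete_File",
    params={"file_id": "draft-42"},          # hypothetical parameter
    policy_id="drafts-cleanup-v1",           # hypothetical policy name
    policy_decision="allow",
)
print(verify_chain(audit_log))
```

Because each entry stores the hash of the previous one, editing or removing an earlier record causes `verify_chain` to fail for the whole log, which is the property an investigator needs when reconstructing who did what.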

Analytics for Trust, Not Just Compliance 🤝

An AI audit trail isn't just about passing a SOC 2 audit—it's an operational necessity.

For developers, it drastically reduces debugging time when complex agent orchestration (like LangGraph workflows) goes off the rails. For SOC teams, it provides the deterministic evidence needed to investigate anomalies without shutting down the entire AI infrastructure.
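
As a rough illustration of how tool-call logs can support that kind of targeted response, the sketch below flags a burst of deletes from a single agent session using a sliding time window. The `DeleteBurstDetector` class, its thresholds, and the `Delete_File` action name are assumptions made for this example; real detection logic would likely be richer and tuned to the environment.

```python
from collections import defaultdict, deque


class DeleteBurstDetector:
    """Flags a session that issues too many deletes within a time window."""

    def __init__(self, max_deletes=5, window_seconds=60.0):
        self.max_deletes = max_deletes
        self.window_seconds = window_seconds
        self._deletes = defaultdict(deque)  # session_id -> recent delete timestamps

    def observe(self, session_id, action, timestamp):
        """Record one tool call; return True if the session exceeds the limit."""
        if action != "Delete_File":
            return False
        window = self._deletes[session_id]
        window.append(timestamp)
        # Drop deletes that have aged out of the window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_deletes


detector = DeleteBurstDetector(max_deletes=3, window_seconds=10.0)
for t in range(5):
    flagged = detector.observe("session-001", "Delete_File", float(t))
    print(t, flagged)
```

Because the detector keys on the session ID, a flagged session can be paused or reviewed on its own while other agents keep running.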

Ultimately, trust in AI cannot be assumed; it must be proven. And proof requires an unbreakable, transparent trail of exactly who did what.

#30DaysOfTrust #AgenticAI #AASB #CyberSecurity #AuditTrail #SecuriX #BuildInPublic #AIInfrastructure
