How to Secure Shadow AI Without Killing Innovation

Why blocking AI isn't the answer, and how to use LLM Gateways and Secure MCP to bring Shadow AI into a secure framework.

Welcome to Day 15 of #30DaysOfTrust.

Yesterday, we shone a light on Shadow AI: the growing trend of employees quietly using unauthorized AI tools to get their work done, often putting sensitive company data at risk. It's a scary thought, but blocking AI entirely isn't the answer. Block it, and your team loses a massive productivity boost.

So, how do we secure it?

A Lesson from the Past: From CASB to AASB 🛡️🌉

To understand the solution, let’s take a quick trip down memory lane. When cloud apps (like Dropbox or Google Drive) first arrived, employees started using them without IT's permission—creating "Shadow IT." To secure this, the industry built CASBs (Cloud Access Security Brokers).

Think of a CASB as a highly intelligent bouncer for the cloud. It sits between employees and the cloud services they use, relying on forward proxies, reverse proxies, and API integrations to keep data from leaking. Today, AI requires a similar but upgraded kind of bouncer. Enter the AASB (AI/Agent Access Security Broker).

Because AI doesn't just store data but also reads, processes, and generates it, we need dedicated checkpoints wherever data flows to and from it.

The Two Pillars of AI Security 🏛️

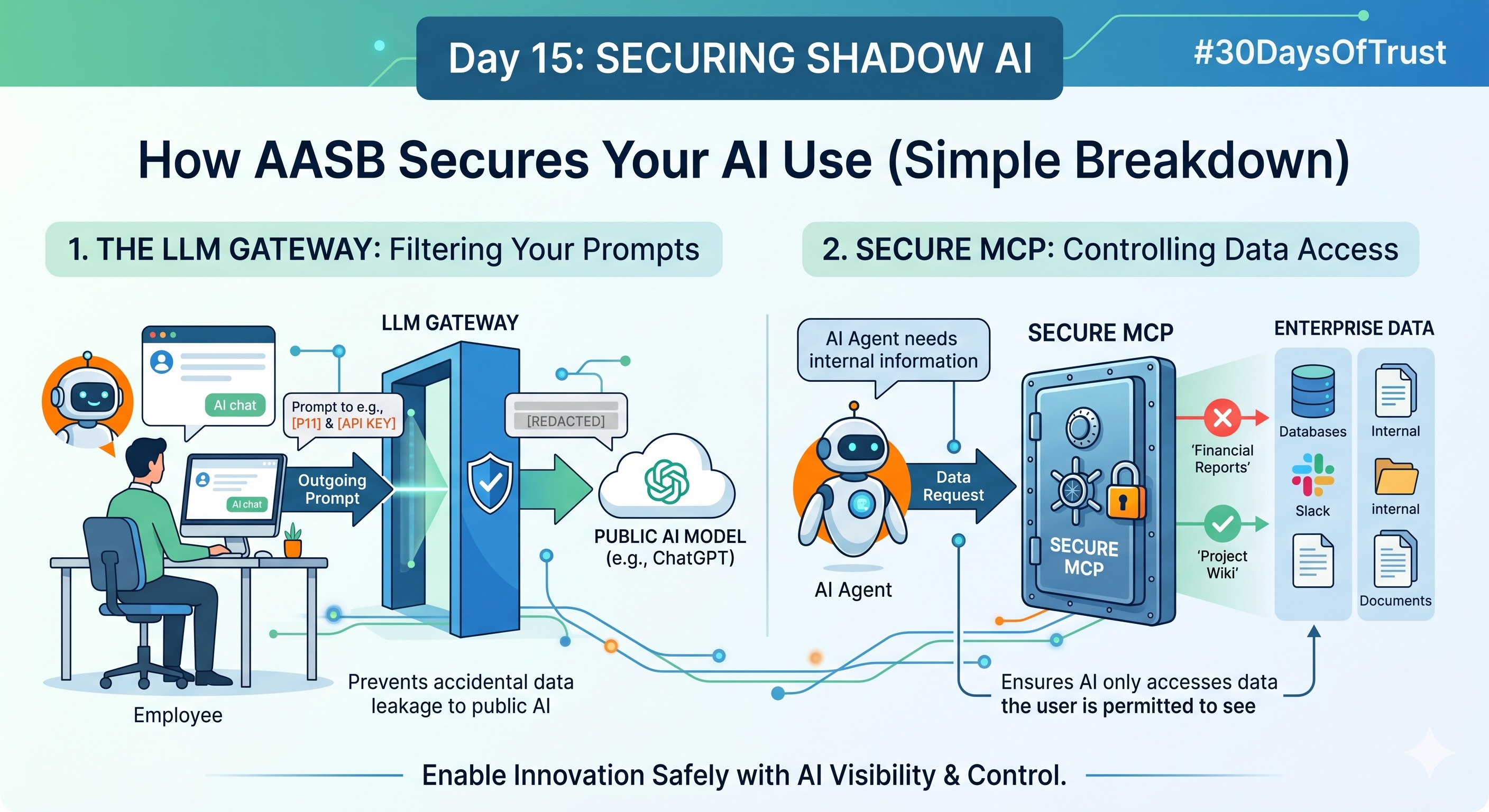

An AASB secures your AI environment through two core mechanisms:

1. The LLM Gateway: The Traffic Cop (Agent ↔ AI Model) 🚦🤖

When an employee (or an AI agent acting on their behalf) asks a public AI model a question, you need to make sure they aren't accidentally pasting in confidential source code or customer PII.

The LLM Gateway sits between the user (or their agent) and the AI model (like ChatGPT or Claude), acting as a real-time inspection point and filter. Before the prompt ever reaches the AI, the Gateway scans it. If it detects sensitive data, it can block the request or, better yet, automatically mask the sensitive information (turning a real name into [USER_NAME]) so the employee still gets an answer without leaking company secrets.
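To make the masking step concrete, here is a minimal Python sketch of the inspection a gateway might run on each outbound prompt. It assumes the gateway already holds a set of known names (say, from a customer or employee directory) and uses two illustrative regexes; `inspect_prompt`, `GatewayDecision`, and the `[EMAIL]` placeholder are hypothetical, and a production gateway would rely on a dedicated PII/DLP detection engine rather than a handful of patterns.

```python
import re
from dataclasses import dataclass, field

# Illustrative detection rules only (hypothetical); real gateways use
# dedicated PII/DLP engines with far broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PRIVATE_KEY_RE = re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----")


@dataclass
class GatewayDecision:
    allowed: bool                      # forward the prompt to the model?
    prompt: str                        # the (possibly masked) prompt to forward
    findings: list[str] = field(default_factory=list)  # what was detected


def inspect_prompt(prompt: str, known_names: set[str]) -> GatewayDecision:
    """Scan an outbound prompt before it ever reaches the AI model."""
    # Some data should never leave the company, even masked: block the request.
    if PRIVATE_KEY_RE.search(prompt):
        return GatewayDecision(allowed=False, prompt="", findings=["private_key"])

    findings: list[str] = []
    masked = prompt

    # Mask email addresses first so name masking can't split them apart.
    if EMAIL_RE.search(masked):
        masked = EMAIL_RE.sub("[EMAIL]", masked)
        findings.append("email")

    # Mask known names, longest first so "Jane Doe" wins over "Jane".
    for name in sorted(known_names, key=len, reverse=True):
        pattern = re.compile(rf"\b{re.escape(name)}\b", re.IGNORECASE)
        if pattern.search(masked):
            masked = pattern.sub("[USER_NAME]", masked)
            findings.append("user_name")

    return GatewayDecision(allowed=True, prompt=masked, findings=findings)


if __name__ == "__main__":
    decision = inspect_prompt(
        "Draft a renewal email to Jane Doe (jane.doe@example.com).",
        known_names={"Jane Doe"},
    )
    print(decision.allowed)  # True
    print(decision.prompt)   # Draft a renewal email to [USER_NAME] ([EMAIL]).
```

The design choice in this sketch is to block outright only what should never leave the company even in masked form (here, a private key) and to mask everything else, so the employee still gets a useful answer.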

2. Secure MCP: The Vault Guard (Agent ↔ Enterprise Data) 🔐🗄️

AI is most powerful when it can read your internal company data to give you specific, relevant answers. The Model Context Protocol (MCP) is an open standard for connecting AI agents to tools and data sources such as your databases, Slack channels, and internal wikis.

But you don't want an intern's AI assistant to have access to the CEO's private financial folders. That's why we need a Secure MCP. It sits between the AI agent and your enterprise data, checking the user's "ID card" (access privileges). It ensures the AI retrieves and reads only the data that the specific employee is explicitly allowed to see.
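The permission check a Secure MCP layer performs can be sketched in a few lines of Python. This sketch is deliberately independent of any MCP SDK: `SecureResourceBroker`, `Document`, `User`, and the group-based access list are hypothetical stand-ins, and it assumes the user's identity and group memberships have already been authenticated (for example, through your identity provider) and travel with every agent request.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    path: str
    content: str
    allowed_groups: frozenset[str]  # groups permitted to read this document


@dataclass(frozen=True)
class User:
    user_id: str
    groups: frozenset[str]  # assumed already authenticated, e.g. via your IdP


class AccessDenied(Exception):
    pass


class SecureResourceBroker:
    """Hypothetical policy layer between an AI agent and enterprise data.

    The agent never touches the data store directly: every read or search
    runs on behalf of a specific user and is checked against that user's
    permissions.
    """

    def __init__(self, documents: list[Document]):
        self._docs = {d.path: d for d in documents}

    @staticmethod
    def _can_read(user: User, doc: Document) -> bool:
        # Allow only if the user belongs to at least one permitted group.
        return bool(user.groups & doc.allowed_groups)

    def read(self, user: User, path: str) -> str:
        doc = self._docs.get(path)
        # Same error for "missing" and "forbidden", so an agent can't probe
        # for the existence of restricted files.
        if doc is None or not self._can_read(user, doc):
            raise AccessDenied(f"{user.user_id} may not read {path}")
        return doc.content

    def search(self, user: User, query: str) -> list[str]:
        # Drop unauthorized documents *before* results reach the agent.
        q = query.lower()
        return [
            d.path
            for d in self._docs.values()
            if self._can_read(user, d) and q in d.content.lower()
        ]


if __name__ == "__main__":
    broker = SecureResourceBroker([
        Document("finance/ceo-comp.xlsx", "Q3 bonus plan", frozenset({"exec"})),
        Document("wiki/onboarding.md", "Q3 onboarding plan", frozenset({"all-staff"})),
    ])
    intern = User("intern-42", frozenset({"all-staff"}))
    print(broker.search(intern, "plan"))  # ['wiki/onboarding.md']
```

Because filtering happens before results reach the agent, the model never sees the CEO's file at all, so it cannot leak it no matter how the prompt is phrased.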

The Bottom Line 🎯

Securing Shadow AI isn't about saying "no" to your employees. It’s about building safe highways so they can drive fast without flying off a cliff. By implementing an LLM Gateway to filter what goes out, and a Secure MCP to control what internal data the AI can see, you can confidently turn Shadow AI into a trusted, powerful business advantage.

#30DaysOfTrust #ShadowAI #AASB #LLMGateway #SecureMCP #DataSecurity #SecuriX #BuildInPublic #AIInfrastructure

Join the Agentic Revolution.

Build secure AI agents with the first-ever Agent Access Security Broker (AASB).