Understanding the 'Shadow AI' Problem

Why the lack of AI oversight is a ticking time bomb for enterprise security and how to bring AI into a secure framework.

Welcome to Day 14 of #30DaysOfTrust.

The Stealthy Rise of Shadow AI 🕵️‍♂️🌑

Imagine this: One of your best developers is stuck on a complex piece of code. To speed things up, they copy the code block and paste it into a public, free-tier AI chatbot, asking it to find the bug. The chatbot fixes the bug in seconds. The developer is a hero, shipping the feature ahead of schedule.

A win for productivity, right?

Not quite. That developer just sent proprietary company code to a public AI service the company doesn't control, potentially exposing intellectual property. This is the reality of Shadow AI.

What is Shadow AI? 🌓

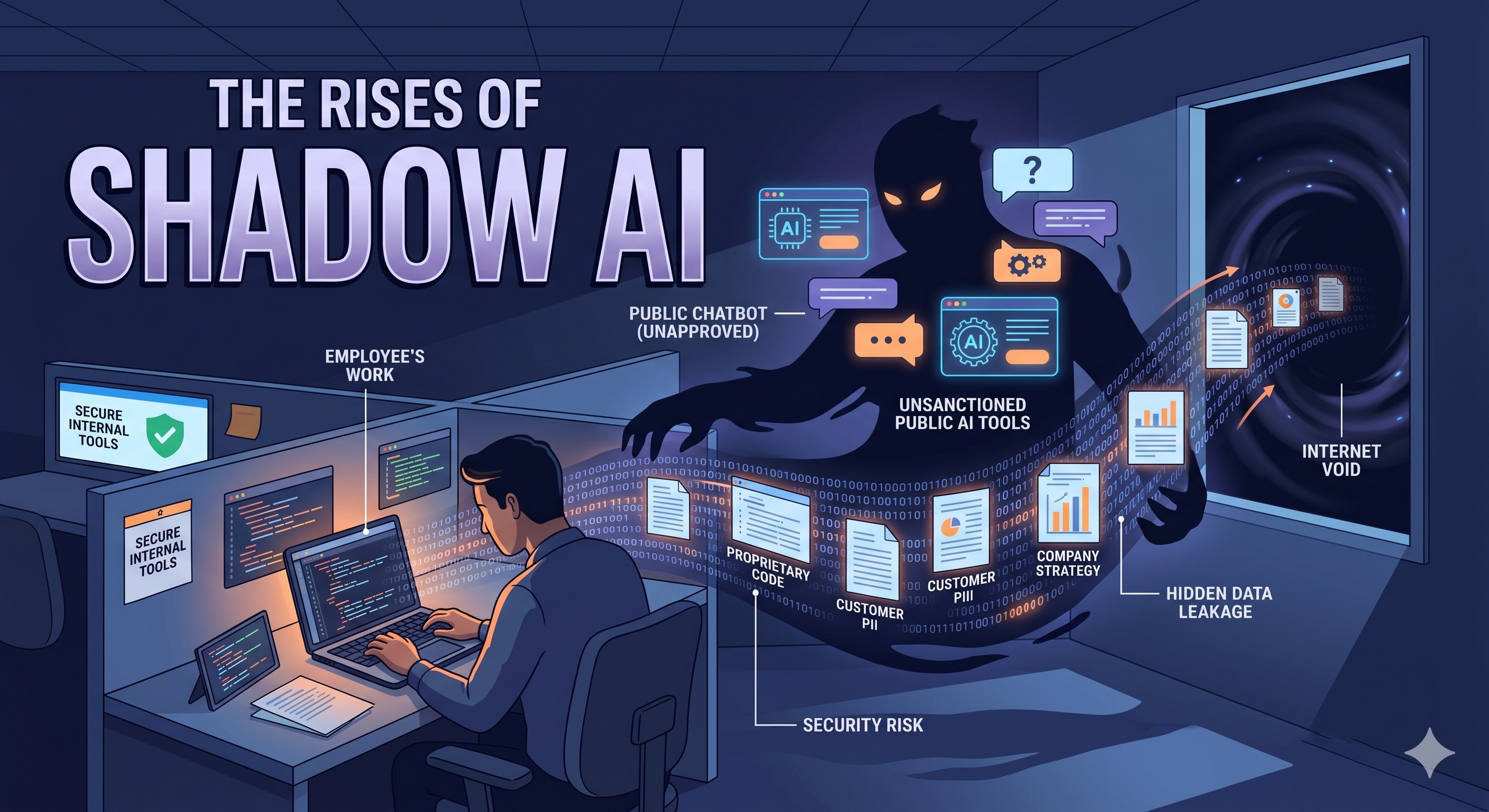

Shadow AI happens when employees use unapproved, outside artificial intelligence tools to do their daily work without the knowledge or oversight of the IT or security teams.

It’s the modern-day equivalent of "Shadow IT" (like when employees used personal Dropbox accounts for company files a decade ago), but with much higher stakes. Employees aren't acting maliciously; they are simply trying to be more efficient. They use public AI tools to summarize meeting notes, draft emails, analyze customer data, or write code.

The Hidden Dangers: Why SOC Teams Are Losing Sleep 🛡️🛰️

The problem isn’t the AI itself; it’s the lack of boundaries. When enterprise data leaves your controlled environment and enters a public AI model, you lose control of it. Here is why Shadow AI is a top priority for security teams:

- 🔓 Data Leaks & IP Loss: Pasting customer PII, financial data, or source code into a public chatbot means that data may be retained by the provider and, depending on its terms, used to train the next version of that model. Your company secrets could end up surfacing in the answer to someone else's prompt.

- ⚖️ Compliance Nightmares: If your business is bound by GDPR or HIPAA, or maintains a SOC 2 attestation, uploading regulated data to unsanctioned tools can put you in violation of those obligations, risking substantial fines or a failed audit.

- 🙈 Loss of Visibility: You can't protect what you can't see. If IT doesn't know which tools are being used, they can't assess the security posture of those vendors.
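
To make the visibility point concrete, here is a minimal sketch of how a team might start counting unsanctioned AI traffic from egress logs. Everything specific here is an assumption for illustration: the `find_shadow_ai` helper, the three-field log format, and the short domain list are hypothetical, and a real inventory would be far longer and maintained by the security team.

```python
from collections import Counter

# Illustrative list only (an assumption); not a complete inventory of AI services.
AI_DOMAINS = {"chat.openai.com", "chatgpt.com", "claude.ai", "gemini.google.com"}


def find_shadow_ai(log_lines, approved=frozenset()):
    """Count requests per (user, domain) to AI domains not on the approved list.

    Each log line is assumed to look like: "<timestamp> <user> <domain>".
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not match the assumed format
        _, user, domain = parts
        domain = domain.lower()
        if domain in AI_DOMAINS and domain not in approved:
            hits[(user, domain)] += 1
    return hits


# Hypothetical sample data for demonstration.
sample = [
    "2024-05-01T09:00:00Z alice chatgpt.com",
    "2024-05-01T09:01:00Z alice chatgpt.com",
    "2024-05-01T09:02:00Z bob claude.ai",
    "2024-05-01T09:03:00Z carol example.com",
]

report = find_shadow_ai(sample, approved={"claude.ai"})
for (user, domain), count in sorted(report.items()):
    print(f"{user} -> {domain}: {count} request(s)")
```

With `claude.ai` treated as an approved tool in this example, only alice's two requests to `chatgpt.com` are flagged. A report like this is a starting point for a conversation about which tools to sanction, not a full detection system.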

Smarter AI Doesn't Automatically Mean Safer Data 💡🛑

The instinct is often to ban these tools completely. But blocking AI is like trying to hold back the tide—employees will just find workarounds. The solution isn't prohibition; it's secure enablement.

Enterprises need infrastructure that allows teams to harness the power of AI while enforcing strict data boundaries. This is where the concept of an Agent Access Security Broker (AASB) becomes critical.

Bringing AI Out of the Shadows ☀️

By sitting between the user (or the internal AI agent) and the external model, an AASB gives you:

- ✂️ Automatic Redaction: Scrub personal identifiers and sensitive data before it ever reaches a public model.

- 📋 Policy Enforcement: Dynamically enforce access policies based on the user's role and the data's sensitivity.

- 🕵️ Unified Auditing: Maintain a clear trail of every prompt and tool call, captured before the request ever leaves your environment.
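
The three capabilities above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular AASB product works: the `Broker` class, the role and sensitivity labels, and the two regex patterns are all hypothetical, and real PII detection needs far broader coverage than a pair of regular expressions.

```python
import re
from dataclasses import dataclass, field

# Simplified patterns for illustration only (an assumption, not production-grade).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical policy: the highest data sensitivity each role may send out.
SENSITIVITY = {"public": 0, "internal": 1, "restricted": 2}
ROLE_CEILING = {"contractor": "public", "engineer": "internal"}


@dataclass
class Broker:
    audit_log: list = field(default_factory=list)

    def redact(self, text):
        """Replace every pattern match with a bracketed label."""
        for label, pattern in PATTERNS.items():
            text = pattern.sub(f"[{label}]", text)
        return text

    def allowed(self, role, sensitivity):
        """Check the role's ceiling; unknown roles are denied by default."""
        ceiling = ROLE_CEILING.get(role)
        if ceiling is None:
            return False
        return SENSITIVITY[sensitivity] <= SENSITIVITY[ceiling]

    def handle(self, user, role, sensitivity, prompt):
        """Return the redacted prompt to forward, or None if policy blocks it."""
        permitted = self.allowed(role, sensitivity)
        outgoing = self.redact(prompt) if permitted else None
        # Record the decision before anything would be forwarded.
        self.audit_log.append(
            {"user": user, "role": role, "sensitivity": sensitivity,
             "allowed": permitted, "forwarded": outgoing}
        )
        return outgoing


broker = Broker()
print(broker.handle("alice", "engineer", "internal",
                    "Email jane.doe@example.com about SSN 123-45-6789"))
print(broker.handle("bob", "contractor", "internal", "Summarize the roadmap"))
print(len(broker.audit_log))
```

In this sketch alice's prompt goes out as `Email [EMAIL] about SSN [SSN]`, bob's request is blocked and returns `None`, and both decisions land in the audit log. The design choice worth noticing is that the audit entry stores only the redacted text, so the log itself does not become a second copy of the sensitive data.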

Innovation shouldn't require sacrificing security. It’s time to bring AI out of the shadows and into a secure framework.

#30DaysOfTrust #ShadowAI #DataSecurity #AASB #EnterpriseSecurity #SecuriX #BuildInPublic #AIInfrastructure
