How to Set Unbreakable Boundaries for AI Agents

Discover the Policy Enforcement Layer: the brains behind AI security, which keeps agents within their limits using OPA and Rego.

Welcome to Day 17 of #30DaysOfTrust.

Yesterday, we turned on "The Radar" to discover the Shadow AI lurking in our networks. Now that we can see the AI agents operating in our environment, we face the next big hurdle: How do we actually control what they do?

If an AI agent is connected to your company’s databases or a user's private files, you can't just cross your fingers and hope it doesn't do something wrong. You need to establish absolute, unbreakable boundaries.

You need a Policy Enforcement Layer.

The Brains Behind the Bouncer 🧠🛡️

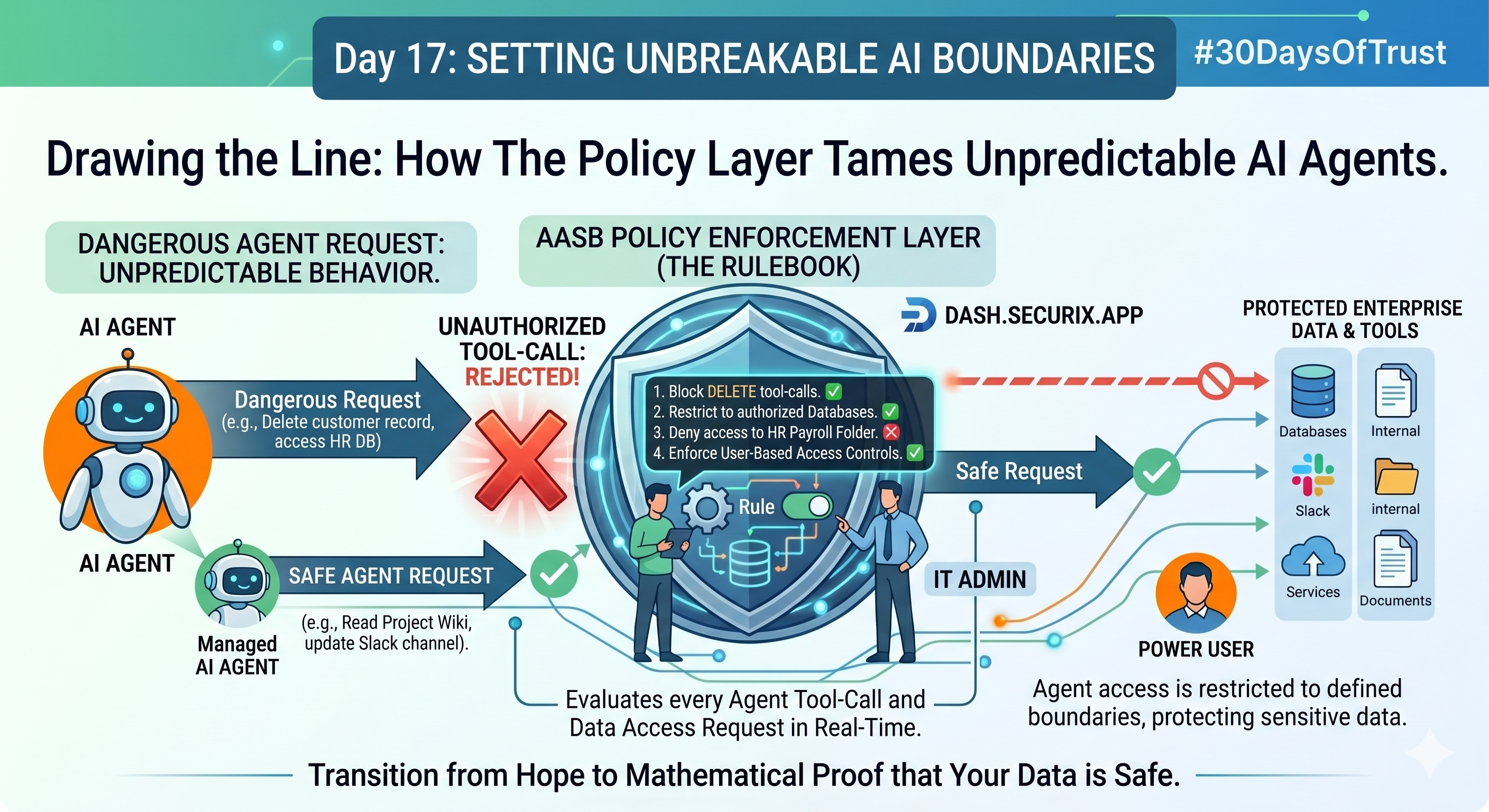

If the Secure MCP (from Day 15) is the vault guard, the Policy Layer is the rulebook that the guard memorizes. It sits directly between the AI Agent and your data, evaluating every single tool-call and data request in milliseconds.

Instead of relying on clunky, hard-coded restrictions, modern AI security calls for fine-grained, infrastructure-level policies that make dynamic decisions. These are often written in Rego, the policy language of the Open Policy Agent (OPA).
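To make the idea concrete, here is a minimal sketch of that checkpoint in Python. It is a stand-in for illustration only, not Rego and not OPA's API: the names `ToolCall`, `ALLOWED`, `PolicyDenied`, and `guarded_call`, along with the `support-bot` agent and `customer_db` tool, are all hypothetical. The point it shows is the ordering: the policy check runs first, and the tool only runs if the check passes.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolCall:
    agent: str   # which agent is asking (hypothetical identifier)
    tool: str    # which tool or data source it wants to reach
    action: str  # e.g. "read", "write", "delete"


# Hypothetical allow-list. Anything not listed is denied by default.
ALLOWED = {
    ("support-bot", "customer_db", "read"),
}


class PolicyDenied(Exception):
    """Raised when a tool-call is rejected before it reaches the data."""


def is_allowed(call: ToolCall) -> bool:
    # Default deny: only exact matches in ALLOWED pass.
    return (call.agent, call.tool, call.action) in ALLOWED


def guarded_call(call: ToolCall, tool_fn: Callable[[], Any]) -> Any:
    # The policy check happens first; tool_fn is never invoked on a denial.
    if not is_allowed(call):
        raise PolicyDenied(f"{call.agent} may not {call.action} {call.tool}")
    return tool_fn()
```

In a real deployment the `is_allowed` step would typically be a query to a policy engine such as OPA rather than an in-memory set, so the rules can change without redeploying the agent.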

Empowering Your Teams 🕹️🏢

Here is how this Policy Layer empowers different groups across the organization:

- 💻 For the Developers Building AI: When developers build AI agents, they face a massive liability: What if my agent hallucinates and deletes customer data? A strong policy layer allows developers to establish rigid, code-level boundaries. Even if the AI model goes completely off the rails and tries to execute a destructive command, the policy layer acts as a hard stop, rejecting the unauthorized tool-call before it reaches the data. This holds as long as every tool-call is routed through the policy layer, which is why it sits directly in the request path.

- 🔑 For the Enterprise IT Admins: IT admins need centralized control to protect company secrets. With a unified policy dashboard, an admin can declare, "The Marketing team's AI agents can read campaign metrics, but they are strictly forbidden from accessing the HR payroll database." The policy is enforced across the entire organization, regardless of which specific AI tool the employee is using.

- ⚡ For the Solo Enthusiast: Even solo enthusiasts connecting AI to their personal Notion workspaces or private GitHub repos need control. A simple policy layer allows them to tell their personal AI assistant, "You can read my notes, but you cannot edit or share them."
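The rules quoted in the bullets above can be written down as data. The following is a rough Python sketch, not Rego and not any product's actual policy format: the `Rule` type, the group and resource names, and the `decide` function are all assumptions made for illustration. It uses two common conventions: an explicit deny overrides any allow, and anything not explicitly allowed is denied.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    effect: str         # "allow" or "deny"
    group: str          # who the rule applies to (hypothetical names)
    resource: str       # what is being accessed
    actions: frozenset  # which actions the rule covers


RULES = [
    # "Marketing agents can read campaign metrics..."
    Rule("allow", "marketing", "campaign_metrics", frozenset({"read"})),
    # "...but are strictly forbidden from accessing the HR payroll database."
    Rule("deny", "marketing", "hr_payroll", frozenset({"read", "write", "delete"})),
    # "You can read my notes, but you cannot edit or share them."
    Rule("allow", "personal-assistant", "notes", frozenset({"read"})),
]


def decide(group: str, resource: str, action: str) -> bool:
    matches = [
        r for r in RULES
        if r.group == group and r.resource == resource and action in r.actions
    ]
    # An explicit deny always wins over an allow.
    if any(r.effect == "deny" for r in matches):
        return False
    # Default deny: with no matching allow rule, the request is rejected.
    return any(r.effect == "allow" for r in matches)


print(decide("marketing", "campaign_metrics", "read"))  # True
print(decide("marketing", "hr_payroll", "read"))        # False
print(decide("personal-assistant", "notes", "share"))   # False
```

Note that "edit" and "share" never need their own deny rules here: because the default is deny, listing only `read` for the notes is enough to block everything else.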

The Bottom Line 🎯

AI is a powerful engine, but a powerful engine needs reliable brakes. By implementing a strict policy layer over your agent's tool-calls, you take much of the anxiety out of unpredictable AI behavior. You move from hoping your data is safe to enforcing explicit, auditable rules that keep it safe.

#30DaysOfTrust #AIPolicy #OPA #Rego #AgentSecurity #DataGovernance #SecuriX #BuildInPublic #AIInfrastructure
