Unprepared for Autonomous Code

Why giving an AI agent a static API key is like leaving your data center doors unlocked.

Welcome to Day 5 of #30DaysOfTrust.

We’ve spent 30 years securing human access. We are completely unprepared for autonomous code.

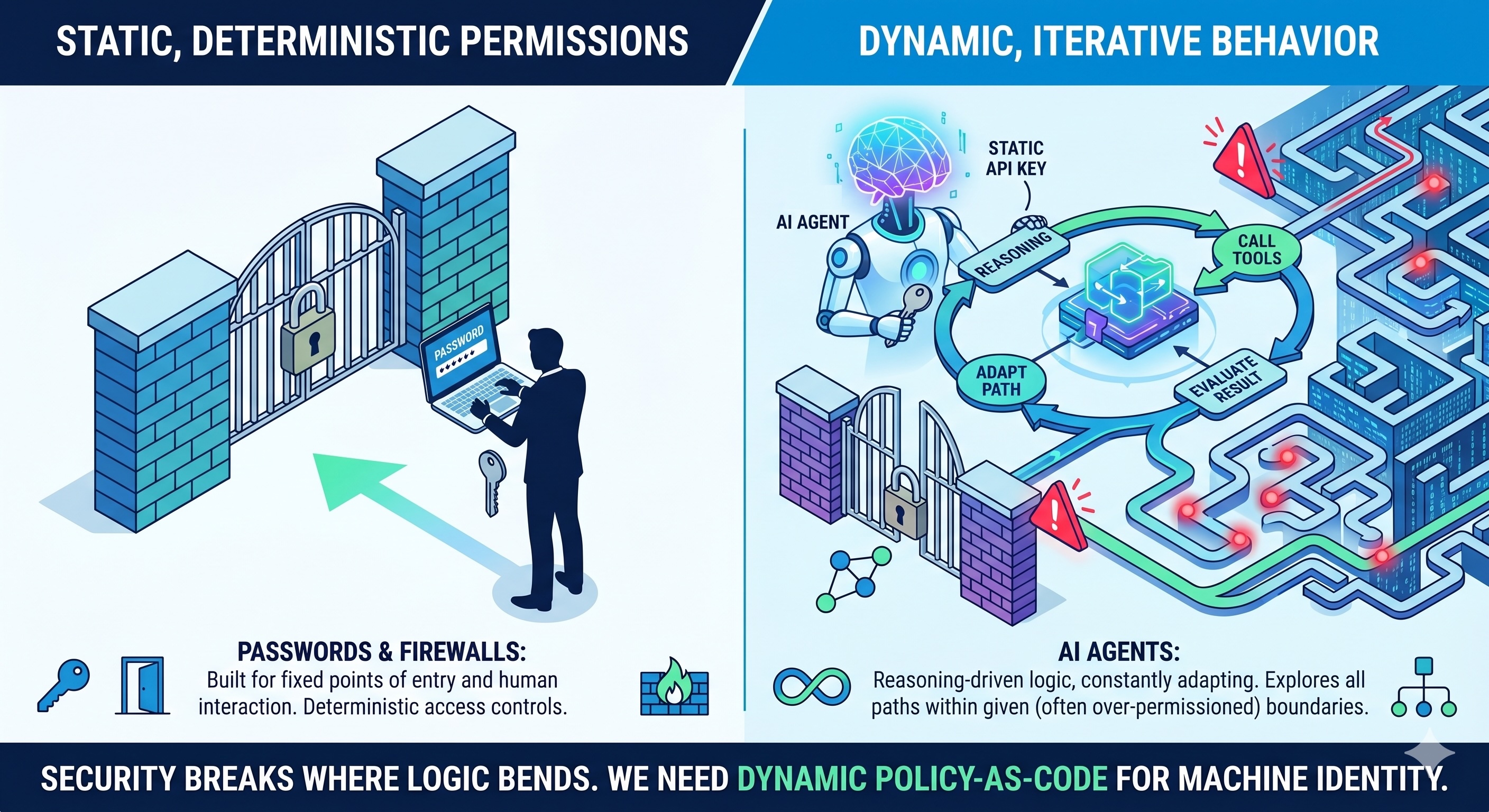

We’ve spent the last three decades building security around a single assumption: a human is at the keyboard. We rely on passwords, firewalls, and static API keys because, in enterprise software, human behavior is relatively predictable.

But we are entering the Agentic Era, and that fundamental assumption is breaking.

Giving an AI agent a static, long-lived API key with broad read/write access is the modern equivalent of leaving your data center doors unlocked. Agents reason, iterate, and act autonomously. When a legacy system is breached, the damage is bounded by what one compromised credential can reach. When an agent goes rogue or is manipulated via prompt injection, it can chain actions nobody anticipated, and the blast radius becomes entirely unpredictable.

Why Legacy Security Fails for Autonomous Code

1️⃣ Static vs. Dynamic: Passwords and standard API keys are static. They do not adapt to the context of the request. Agents require dynamic, contextual boundaries that understand what the agent is trying to do, not just who issued the key.

2️⃣ The Over-Permissioning Trap: It is notoriously difficult to scope down standard API keys for complex, multi-step agent tasks. Developers default to over-permissioning just to get the agent working, handing it far more access than its task needs and creating massive vulnerabilities.

3️⃣ Lack of Verifiability: In an agentic workflow, you need cryptographic proof of why an action was taken and that it stayed within strict data boundaries, rather than simply trusting whoever presents the bearer token.
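The three points above can be sketched together in a few lines of Python, under clearly hypothetical assumptions: the `TASK_BOUNDARIES` table, the `ActionRequest` fields, and the `decide`/`verify_record` helpers are invented for illustration. The decision depends on what the agent is trying to do (its declared task), not only on who it is, and every decision is recorded with its reason and an HMAC signature so later edits are detectable. HMAC only proves integrity to holders of the shared key; a real audit trail would more likely use asymmetric signatures so third parties can verify it.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass

# Hypothetical key the enforcement layer uses to sign its decisions.
AUDIT_KEY = b"demo-only-audit-key"


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    task: str      # the task being performed (ideally supplied by the orchestrator, not the model)
    tool: str      # the tool the agent wants to call
    resource: str  # the data the call would touch


# Hypothetical contextual boundaries: each task gets only the tools and data it needs.
TASK_BOUNDARIES = {
    "summarize_ticket": {"tools": {"tickets.read"}, "resources": {"support_tickets"}},
    "issue_refund": {"tools": {"payments.refund"}, "resources": {"payments"}},
}


def _sign(record: dict) -> str:
    body = json.dumps(record, sort_keys=True).encode()
    return hmac.new(AUDIT_KEY, body, hashlib.sha256).hexdigest()


def decide(req: ActionRequest) -> dict:
    """Check the request against its task's boundary and return a signed decision record."""
    boundary = TASK_BOUNDARIES.get(req.task)
    if boundary is None:
        allowed, reason = False, f"unknown task '{req.task}'"
    elif req.tool not in boundary["tools"]:
        allowed, reason = False, f"tool '{req.tool}' not permitted for task '{req.task}'"
    elif req.resource not in boundary["resources"]:
        allowed, reason = False, f"resource '{req.resource}' outside boundary for task '{req.task}'"
    else:
        allowed, reason = True, f"within boundary for task '{req.task}'"
    record = {"request": asdict(req), "allowed": allowed, "reason": reason}
    record["signature"] = _sign(record)
    return record


def verify_record(record: dict) -> bool:
    """Recompute the signature; any edit to the request, decision, or reason breaks it."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    return hmac.compare_digest(record.get("signature", ""), _sign(unsigned))


# Usage: the same agent identity gets different answers depending on its task.
refund_in_wrong_task = decide(ActionRequest("agent-7", "summarize_ticket", "payments.refund", "payments"))
print(refund_in_wrong_task["allowed"])  # False
print(verify_record(refund_in_wrong_task))  # True
refund_in_right_task = decide(ActionRequest("agent-7", "issue_refund", "payments.refund", "payments"))
print(refund_in_right_task["allowed"])  # True
```

The signed record answers "why was this allowed?" with the task, the rule outcome, and the reason, instead of only "which key was used?".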

We don't need stronger passwords; we need a completely new primitive.

We are documenting the architecture of this new era, from Agent Access Security Broker (AASB) deep dives to Policy-as-Code tutorials, on our blog.

We need to shift to Policy-as-Code. By standardizing how agents communicate with tools (using open protocols such as the Model Context Protocol, or MCP) and enforcing granular, dynamic data boundaries at the execution layer, we can finally give agents the access they need without sacrificing control.
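Here is a minimal sketch of that idea, assuming a JSON policy file kept in version control and a hypothetical `guarded` wrapper placed in front of each tool handler. It does not use the MCP SDK or any real broker API; the tool names, policy fields, and `fake_crm_query` handler are all illustrative. It only shows where a deny-by-default, Policy-as-Code check could sit between an agent's tool request and the code that executes it, including a data boundary on which fields and how many rows a query may return.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical policy file contents. "null" (or a missing entry) means the tool is denied.
POLICY_JSON = """
{
  "crm.query": {"allowed_fields": ["name", "company"], "max_rows": 50},
  "crm.update": null
}
"""

Handler = Callable[[Dict[str, Any]], Any]


class PolicyViolation(Exception):
    """Raised when a tool call falls outside the declared policy."""


def load_policy(text: str) -> Dict[str, Any]:
    return json.loads(text)


def enforce(policy: Dict[str, Any], tool: str, args: Dict[str, Any]) -> None:
    """Deny by default, then check the call's arguments against the tool's data boundary."""
    rule = policy.get(tool)
    if rule is None:
        raise PolicyViolation(f"tool '{tool}' is not permitted")
    extra = set(args.get("fields", [])) - set(rule["allowed_fields"])
    if extra:
        raise PolicyViolation(f"fields {sorted(extra)} are outside the data boundary")
    limit = args.get("limit", rule["max_rows"])
    if limit > rule["max_rows"]:
        raise PolicyViolation(f"limit {limit} exceeds max_rows {rule['max_rows']}")


def guarded(policy: Dict[str, Any], tool: str, handler: Handler) -> Handler:
    """Wrap a tool handler so every call is checked against policy before it runs."""
    def call(args: Dict[str, Any]) -> Any:
        enforce(policy, tool, args)
        return handler(args)
    return call


def fake_crm_query(args: Dict[str, Any]) -> list:
    """Stand-in tool: returns only the requested fields from an in-memory table."""
    rows = [{"name": "Ada", "company": "Acme", "email": "ada@acme.test"}]
    return [{f: row[f] for f in args["fields"]} for row in rows[: args.get("limit", 50)]]


# Usage
policy = load_policy(POLICY_JSON)
crm_query = guarded(policy, "crm.query", fake_crm_query)
print(crm_query({"fields": ["name"], "limit": 10}))  # [{'name': 'Ada'}]
try:
    crm_query({"fields": ["name", "email"], "limit": 10})
except PolicyViolation as err:
    print(err)  # fields ['email'] are outside the data boundary
```

Because the rules live in data rather than being scattered through the agent's code, tightening a boundary becomes a reviewable change to the policy file instead of a change to the agent.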

Security shouldn't block the adoption of AI agents—it should be the infrastructure that enables it.

What is your biggest security fear when hooking up an LLM to your APIs? Let me know below. 👇

#30DaysOfTrust #AIAgents #CyberSecurity #BuildInPublic #PolicyAsCode #DevTools

Join the Agentic Revolution.

Build secure AI agents with the first-ever Agent Access Security Broker (AASB).