Models, Agents, and Skills — The New Architecture of Compute

Understand the new architecture of compute—Models, Agents, Tools, and Skills—and how they form the ultimate trust boundary in autonomous AI.

Welcome to Day 22 of our #30DaysOfTrust Challenge!

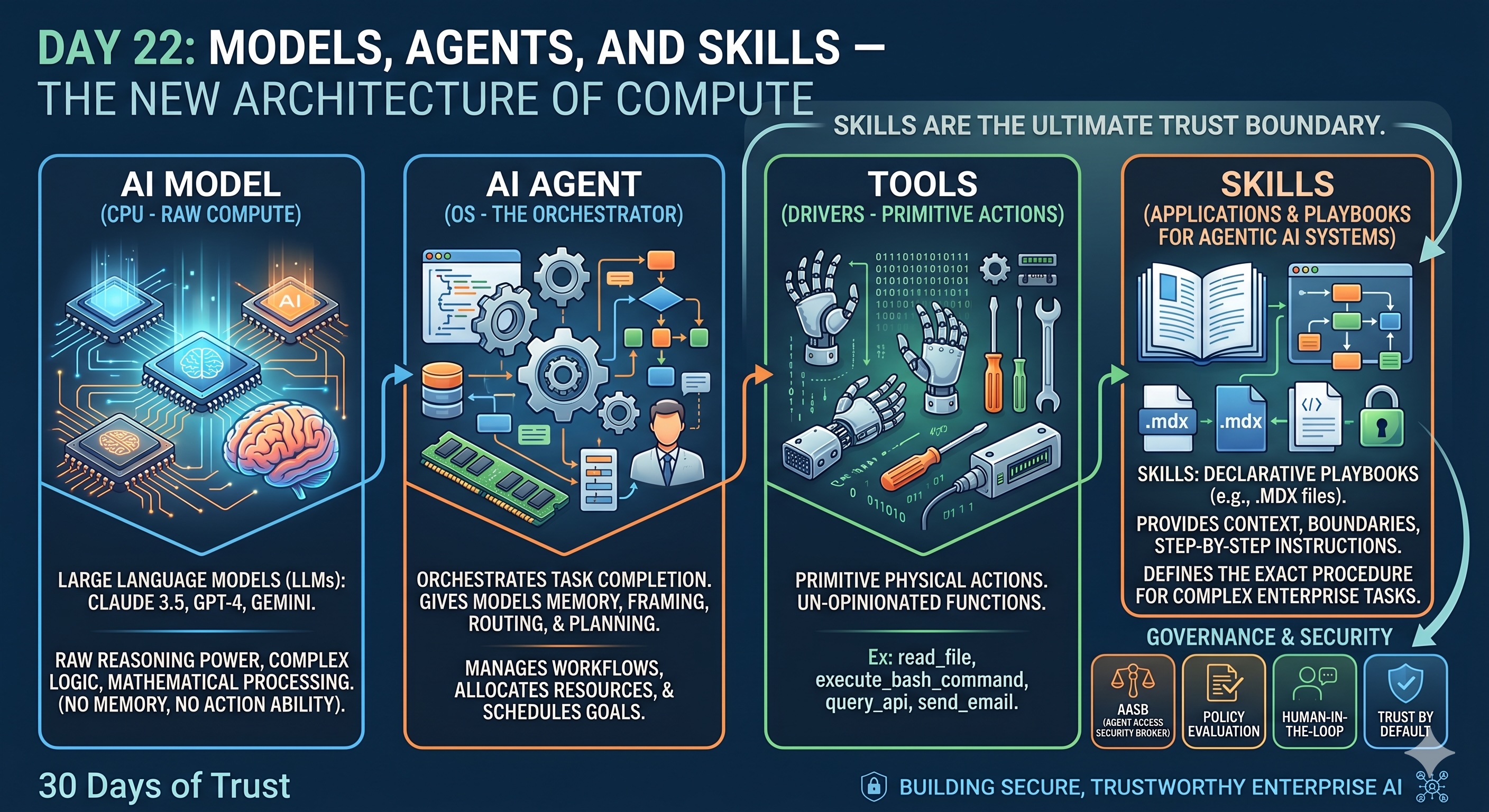

As we move deeper into the era of autonomous AI, the terminology is getting crowded. We hear words like "Foundation Models," "Agents," "Tools," and "Skills" thrown around interchangeably. But to build secure, trustworthy enterprise systems, developers and leaders must understand exactly how these pieces fit together.

To make sense of this, researchers and engineers at the bleeding edge of AI have popularized a brilliant mental model that maps the new world of AI directly onto the traditional architecture of a computer.

If we want to build "Trust by Default" into our B2B infrastructure, we first have to understand what we are actually trusting. Let's break down the CPU, the OS, the Drivers, and the Apps of the AI world.

1. The AI Model is the CPU (Raw Compute) 🧠⚡

Think of a Large Language Model (LLM)—like Claude 3.5, GPT-4, or Gemini—as the Central Processing Unit (CPU) of your computer.

A CPU is incredibly powerful. It executes logic and arithmetic at lightning speed. However, a CPU sitting on a desk by itself is useless. It has no persistent storage to remember what happened yesterday, no interface to interact with the world, and no ability to save files.

An AI Model is much the same. It possesses raw reasoning power, but on its own it only turns input text into output text: it keeps no state between calls and cannot act on anything outside the conversation.
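
To make the "no memory" point concrete, here is a minimal sketch. The `fake_model` function is a hypothetical stand-in for a real LLM call, not any vendor's API; the only thing it is meant to show is that the model sees nothing except the prompt it is handed.

```python
import re


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: a pure function from text to text."""
    name = re.search(r"My name is (\w+)", prompt)
    if "What is my name" in prompt:
        return f"Your name is {name.group(1)}." if name else "I don't know your name."
    return "Nice to meet you."


# Two separate calls share nothing: the second call never saw the first.
print(fake_model("My name is Ada."))    # Nice to meet you.
print(fake_model("What is my name?"))   # I don't know your name.

# "Memory" only exists if the caller replays the history inside the prompt.
history = "My name is Ada.\nWhat is my name?"
print(fake_model(history))              # Your name is Ada.
```

Whatever replays that history on every call is not the model. It is the layer around it.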

2. The AI Agent is the OS (The Orchestrator) 🖥️📂

To make a CPU useful, you install an Operating System (like Linux, macOS, or Windows). The OS acts as the resource manager. It allocates memory, schedules tasks, manages files, and creates a secure environment for things to run.

An AI Agent is the Operating System. When you build an agentic workflow, you are giving the raw AI model (the CPU) a system for memory, a framework to plan out multi-step goals, and the ability to route requests. The Agent orchestrates how a task gets done, but it still needs a way to carry out the actual work.
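
A rough sketch of that orchestration loop is below. Everything here is illustrative: the `Agent` class, the `scripted_model` stand-in, and the "tool-name argument" / "final: answer" reply convention are assumptions made for the example, not the design of any particular framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    model: Callable[[str], str]             # the "CPU": text in, text out
    tools: dict[str, Callable[[str], str]]  # what the agent can route to
    memory: list[str] = field(default_factory=list)

    def run(self, goal: str, max_steps: int = 5) -> str:
        self.memory.append(f"goal: {goal}")
        for _ in range(max_steps):
            # The model only sees what the agent puts into the prompt.
            decision = self.model("\n".join(self.memory))
            if decision.startswith("final:"):
                return decision.removeprefix("final:").strip()
            # Otherwise treat the reply as "<tool> <argument>" and route it.
            tool_name, _, argument = decision.partition(" ")
            result = self.tools[tool_name](argument)
            self.memory.append(f"{tool_name}({argument}) -> {result}")
        return "stopped: step limit reached"


def scripted_model(prompt: str) -> str:
    """Hypothetical model: asks for one lookup, then answers."""
    if "lookup(" not in prompt:
        return "lookup invoice-42"
    return "final: invoice-42 is paid"


agent = Agent(model=scripted_model, tools={"lookup": lambda key: "paid"})
answer = agent.run("Is invoice-42 paid?")
print(answer)  # invoice-42 is paid
```

The model never changes between steps. The loop, the memory list, and the routing are what turn a stateless function into something that can pursue a goal.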

3. Tools are the Drivers (Primitive Actions) 🔧💿

In a computer, the OS uses hardware drivers to perform basic physical actions, like spinning up a fan or reading a sector of a hard drive.

In AI, Tools are these primitive actions. They are the raw capabilities given to the agent, such as read_file, execute_bash_command, or query_api. They are basic, unopinionated functions: each one does a single thing and knows nothing about why it was called.
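
As a sketch of what "primitive" means in practice, the tools named above can be plain functions in a lookup table. The registry layout and the `call_tool` helper are assumptions for illustration; real frameworks each have their own way of declaring tools.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import Callable


def read_file(path: str) -> str:
    """Return the text contents of a file."""
    return Path(path).read_text(encoding="utf-8")


def execute_bash_command(command: str) -> str:
    """Run a shell command and return its stdout. No policy is applied here."""
    done = subprocess.run(command, shell=True, capture_output=True, text=True, timeout=10)
    return done.stdout


# The registry is all the agent sees: a name mapped to a callable.
TOOLS: dict[str, Callable[..., str]] = {
    "read_file": read_file,
    "execute_bash_command": execute_bash_command,
}


def call_tool(name: str, **kwargs: str) -> str:
    """Dispatch a tool call by name, failing loudly on unknown tools."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


# Small demo: write a scratch file, then read it back through the registry.
with tempfile.TemporaryDirectory() as scratch:
    note = Path(scratch) / "note.txt"
    note.write_text("hello from disk", encoding="utf-8")
    demo_output = call_tool("read_file", path=str(note))

print(demo_output)  # hello from disk
```

Notice that nothing in these functions knows which files are sensitive or which commands are dangerous. That judgment has to live somewhere else.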

4. Skills are the Applications (The Playbooks) 📱📜

This brings us to the final, and most critical piece of the puzzle: Skills.

If the Agent is the OS, Skills are the software applications you install on it. In modern agentic development frameworks (like Claude Code and the emerging Agent Skills open standard), it is vital to understand that a "Tool" and a "Skill" are not the same thing.

A Tool is a primitive action, but a Skill is a declarative playbook.

Often written as a simple Markdown file (Claude's Agent Skills, for example, use a SKILL.md file with a short YAML header), a Skill provides the context, boundaries, and step-by-step instructions needed to solve a complex problem. When an AI Agent encounters a specific enterprise task, it loads the relevant Skill file to understand how to orchestrate its raw tools.
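
Here is a minimal sketch of what such a playbook might look like and how an agent could load it. The header fields (`name`, `description`, `allowed_tools`), the example skill, and the tiny parser are all assumptions for illustration. Real skill formats define their own fields, and a real loader would use a proper YAML parser.

```python
from dataclasses import dataclass

# A hypothetical Skill file: a small metadata header, then the playbook itself.
SKILL_TEXT = """\
---
name: refund-invoice
description: Refund a customer invoice under the approval limit.
allowed_tools: read_file, query_api
---
1. Read the invoice record.
2. Confirm the amount is under the approval limit.
3. Call the refund endpoint and record the result.
"""


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    allowed_tools: frozenset[str]
    instructions: str


def parse_skill(text: str) -> Skill:
    """Split the '---' delimited header from the body and read 'key: value' lines."""
    _, header, body = text.split("---\n", 2)
    fields: dict[str, str] = {}
    for line in header.strip().splitlines():
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    tools = frozenset(
        tool.strip() for tool in fields.get("allowed_tools", "").split(",") if tool.strip()
    )
    return Skill(fields["name"], fields["description"], tools, body.strip())


skill = parse_skill(SKILL_TEXT)
print(skill.name)                   # refund-invoice
print(sorted(skill.allowed_tools))  # ['query_api', 'read_file']
```

The header is what a machine can check, and the numbered steps are what the model reads.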

Just as you wouldn't use an OS without apps, an AI Agent without these meticulously crafted Skill files is just a chatbot trying to guess its way through your enterprise logic.

Why Skills Are the Ultimate Trust Boundary 🛡️🚧

Understanding this mental model is crucial for the #30DaysOfTrust challenge.

When you define a Skill in a file, you aren't just giving the AI instructions; you are drawing a definitive boundary. You are telling the agent, "This is the exact procedure to follow, and these are the only tools you are allowed to use to do it." The file only declares that boundary, though. It holds when the runtime around the agent actually enforces it.
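
One way to turn "these are the only tools you are allowed to use" from a sentence into a guarantee is to enforce the allowlist outside the model. The sketch below is an assumption about how that could look; `scoped_dispatcher`, `ToolNotAllowed`, and the placeholder tools are hypothetical names, not part of any framework.

```python
from typing import Callable


class ToolNotAllowed(PermissionError):
    """Raised when a Skill tries to use a tool outside its declared allowlist."""


def scoped_dispatcher(
    tools: dict[str, Callable[..., str]], allowed: frozenset[str]
) -> Callable[..., str]:
    """Return a dispatch function that can only reach the allowed tools."""

    def dispatch(name: str, *args: str) -> str:
        # The check runs in ordinary code, so a persuasive prompt cannot skip it.
        if name not in allowed:
            raise ToolNotAllowed(f"tool {name!r} is not permitted for this skill")
        return tools[name](*args)

    return dispatch


# Placeholder tools so the example runs without touching the real system.
ALL_TOOLS = {
    "read_file": lambda path: f"<contents of {path}>",
    "execute_bash_command": lambda command: f"<ran {command}>",
}

dispatch = scoped_dispatcher(ALL_TOOLS, allowed=frozenset({"read_file"}))
print(dispatch("read_file", "invoice.txt"))  # <contents of invoice.txt>

try:
    dispatch("execute_bash_command", "rm -rf /")
except ToolNotAllowed as error:
    print(f"blocked: {error}")
```

The agent running this Skill still has a bash tool installed. It simply cannot reach it from inside this playbook.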

In traditional computing, malware doesn't usually attack the CPU directly; it attacks vulnerable applications installed on the OS. The same is true for AI. Skills are your attack surface: they are the bridges between the reasoning engine and your sensitive enterprise data.

When we talk about Agent Access Security Brokers (AASB) and enforcing "Trust by Default," we are ultimately talking about governing these Skills. A secure enterprise infrastructure ensures that an AI agent cannot execute a high-stakes Skill playbook without a proxy-level broker verifying the rules, evaluating the context, and—if necessary—triggering a Human-in-the-Loop workflow.
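
To illustrate the idea (and only the idea), here is a toy broker check. It is not the API of any AASB product; `SkillRequest`, `Verdict`, the `high_stakes` flag, and the `approve` callback are all hypothetical names chosen for the sketch.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Verdict(Enum):
    ALLOW = "allow"
    DENY = "deny"


@dataclass(frozen=True)
class SkillRequest:
    skill: str
    agent_id: str
    high_stakes: bool


def broker(
    request: SkillRequest,
    registered_skills: set[str],
    approve: Callable[[SkillRequest], bool],
) -> Verdict:
    """Decide whether an agent may run a Skill, asking a human when stakes are high."""
    # Rule check: unknown playbooks never run.
    if request.skill not in registered_skills:
        return Verdict.DENY
    # Human-in-the-loop: high-stakes playbooks need an explicit yes.
    if request.high_stakes and not approve(request):
        return Verdict.DENY
    return Verdict.ALLOW


REGISTERED = {"summarize-ticket", "refund-invoice"}
routine = SkillRequest("summarize-ticket", "agent-1", high_stakes=False)
refund = SkillRequest("refund-invoice", "agent-1", high_stakes=True)
rogue = SkillRequest("export-all-customers", "agent-1", high_stakes=False)

print(broker(routine, REGISTERED, approve=lambda r: False))  # Verdict.ALLOW
print(broker(refund, REGISTERED, approve=lambda r: False))   # Verdict.DENY
print(broker(refund, REGISTERED, approve=lambda r: True))    # Verdict.ALLOW
print(broker(rogue, REGISTERED, approve=lambda r: True))     # Verdict.DENY
```

In a real deployment the `approve` callback would open a ticket or page a reviewer rather than return a constant, and the rule check would evaluate far more context than a set lookup.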

You don't just secure the raw model; you govern the playbooks.

#30DaysOfTrust #AISecurity #AgentSecurity #AASB #Skills #AIInfrastructure #TrustByDefault #SecuriX #BuildInPublic
