The Goldfish Problem: Building AI Agents That Remember (Without Leaking Secrets)

Discover how AI agents use semantic, episodic, and procedural memory to function in the enterprise, and the security measures needed to prevent data leaks.

Welcome to Day 25 of our #30DaysOfTrust Challenge!

An out-of-the-box Large Language Model (LLM) is brilliant, but it has a fatal flaw: it’s a genius with a ten-second memory.

If you ask an LLM a question, it answers. If you ask a follow-up, it only understands the context because you are feeding the previous chat back to it. On its own, it has the memory of a goldfish. But for an AI Agent to be truly useful in an enterprise—to execute complex workflows, understand user intent, and act autonomously—it needs to remember.
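To make the goldfish problem concrete, here is a minimal sketch of a stateless chat loop. `stub_llm` is a hypothetical stand-in for a real chat-completion endpoint. Like a real endpoint, it only sees the messages passed on the current call, so the application has to resend the whole history on every turn.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": "user" | "assistant", "content": "..."}


def stub_llm(messages: List[Message]) -> str:
    """Hypothetical stand-in for a stateless chat-completion call.

    It can only "see" the messages passed in on this call; nothing
    persists between calls.
    """
    last = messages[-1]["content"]
    if last == "What is my name?":
        # Search the supplied history (and only that) for an introduction.
        for msg in reversed(messages[:-1]):
            if msg["role"] == "user" and msg["content"].startswith("My name is "):
                return "Your name is " + msg["content"][len("My name is "):].rstrip(".")
        return "I don't know your name."
    return "Noted."


def chat_turn(history: List[Message], user_text: str) -> str:
    """Append the user turn, resend the WHOLE history, then store the reply."""
    history.append({"role": "user", "content": user_text})
    reply = stub_llm(history)
    history.append({"role": "assistant", "content": reply})
    return reply


history: List[Message] = []
chat_turn(history, "My name is Ada.")
print(chat_turn(history, "What is my name?"))  # → Your name is Ada
print(stub_llm([{"role": "user", "content": "What is my name?"}]))  # → I don't know your name.
```

The "memory" lives entirely in the `history` list owned by the application, not in the model.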

To solve this, developers are turning to cognitive science, essentially rebuilding human memory structures in code. Let's break down the three distinct types of memory giving agents their "brain," and the massive security headache they introduce.

The Trinity of Agent Memory 🧠🔋

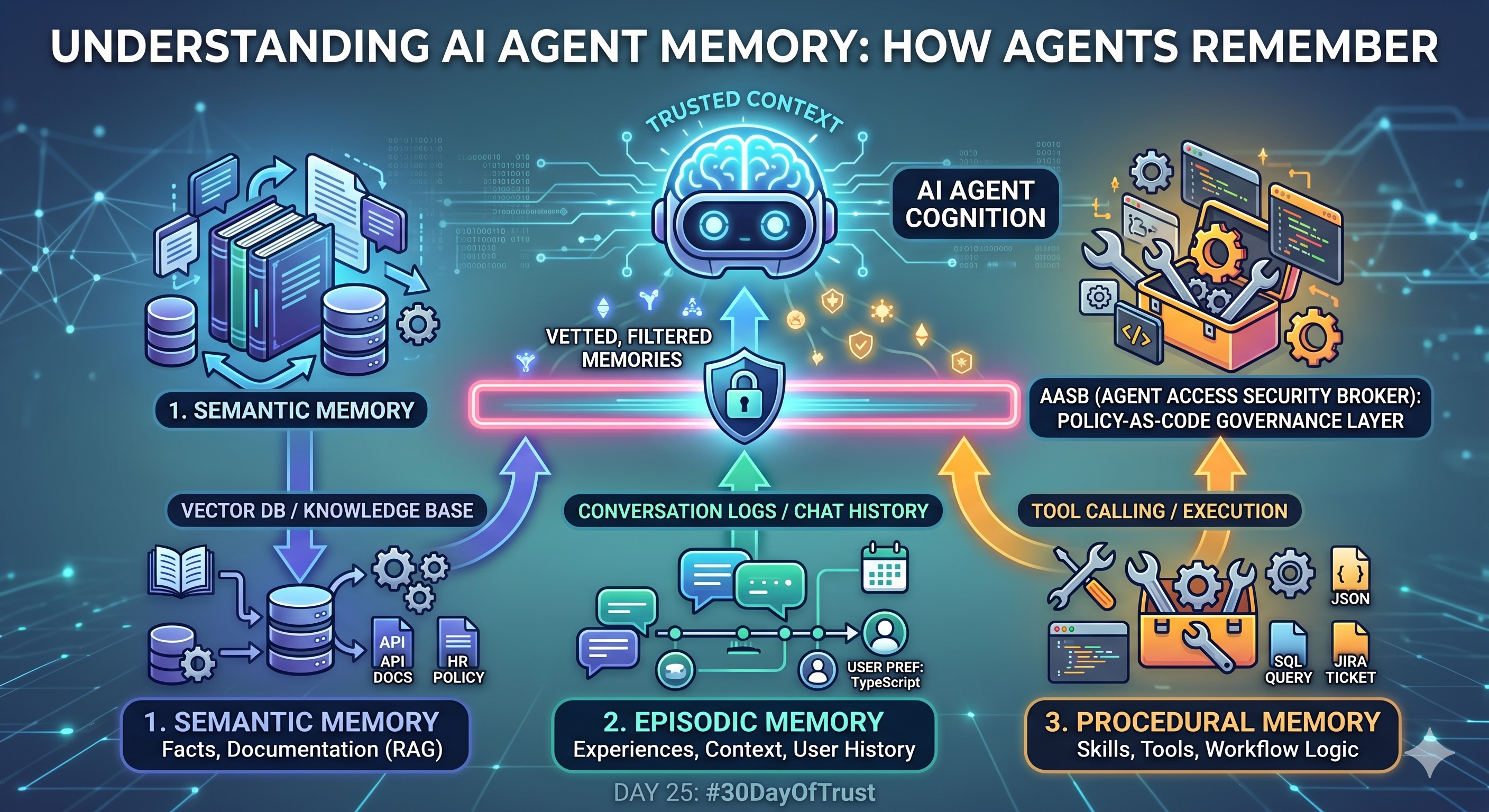

To move beyond simple chat, AI Agents rely on three distinct memory systems:

- Semantic Memory (The Library): Think of this as the agent's factual knowledge base. It doesn’t change based on who is talking to it. In the AI world, this is powered by Retrieval-Augmented Generation (RAG) and Vector Databases. Example: An agent retrieving your company’s HR policy or API documentation to answer a technical question. It’s recalling established facts.

- Episodic Memory (The Journal): This is the agent's memory of experiences and conversations. It’s how the agent remembers what happened 10 minutes ago, or 10 days ago, with a specific user. Example: If you tell a coding agent, "I prefer using TypeScript," it stores that in its episodic memory. The next time you ask it to write a script, it automatically uses TypeScript without you needing to remind it.

- Procedural Memory (The Toolbox): This is the "muscle memory" or "how-to" knowledge. It’s not just knowing a fact; it’s knowing how to execute a skill. Example: In frameworks like LangGraph, this involves the agent knowing when and how to call external tools, like querying a SQL database, creating a Jira ticket, or pinging a Slack channel.
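The three systems can be sketched as three small stores with different access patterns. This is an illustrative sketch, not how LangGraph or any vector database implements them. Naive word-overlap scoring stands in for embedding similarity search, and the `create_ticket` tool is hypothetical. A real version would call the Jira API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SemanticMemory:
    """The Library: shared facts, identical for every user.

    Assumption: word-overlap scoring stands in for the embedding
    similarity search a real vector database would perform.
    """
    documents: List[str] = field(default_factory=list)

    def retrieve(self, query: str, k: int = 1) -> List[str]:
        q = set(query.lower().split())
        ranked = sorted(self.documents,
                        key=lambda d: len(q & set(d.lower().split())),
                        reverse=True)
        return ranked[:k]


@dataclass
class EpisodicMemory:
    """The Journal: per-user experiences and stated preferences."""
    events: Dict[str, List[str]] = field(default_factory=dict)

    def record(self, user_id: str, event: str) -> None:
        self.events.setdefault(user_id, []).append(event)

    def recall(self, user_id: str) -> List[str]:
        return list(self.events.get(user_id, []))


@dataclass
class ProceduralMemory:
    """The Toolbox: named skills the agent knows how to execute."""
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        self.tools[name] = fn

    def run(self, name: str, arg: str) -> str:
        return self.tools[name](arg)


semantic = SemanticMemory([
    "Vacation policy: employees get 25 days of paid leave.",
    "API docs: use the /v2/orders endpoint for new orders.",
])
episodic = EpisodicMemory()
procedural = ProceduralMemory()

episodic.record("alice", "prefers TypeScript")
# Hypothetical tool; a real one would call an external ticketing API.
procedural.register("create_ticket", lambda title: f"TICKET-1: {title}")

print(semantic.retrieve("how many vacation days do employees get"))
print(episodic.recall("alice"))  # → ['prefers TypeScript']
print(procedural.run("create_ticket", "Fix login bug"))  # → TICKET-1: Fix login bug
```

Note the key difference: `SemanticMemory.retrieve` ignores who is asking, while `EpisodicMemory.recall` is keyed by user. That distinction matters later for security.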

The Cognitive Science Bridge: Teaching Agents to Think 🌉🧪

If we just dump every piece of data into an agent's memory, its "brain" will get cluttered. It will become slow, expensive to run (due to massive token counts), and prone to hallucinations.

To fix this, developers are borrowing concepts from cognitive science:

- Reflection: Advanced agents are programmed to periodically pause and "reflect" on their episodic memory, summarizing long conversations into core takeaways.

- Importance Scoring: Just like you remember your wedding day better than what you had for lunch last Tuesday, agents are being taught to score memories. A user's core preference gets a high score and stays in active memory; casual pleasantries get a low score and are "forgotten" or archived.
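Importance scoring and reflection can be sketched together. This is a minimal sketch under stated assumptions. The keyword-based `score` function is a hypothetical heuristic, since many agent designs instead ask an LLM to rate importance. `reflect` joins the top memories where a real agent would send them to an LLM with a summarization prompt.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Memory:
    text: str
    importance: float  # 0.0 (trivial) .. 1.0 (core fact)


# Hypothetical high-signal cues; an LLM-based rater would replace this.
HIGH_SIGNAL = ("prefer", "always", "never", "deadline", "allergic")


def score(text: str) -> float:
    lowered = text.lower()
    if any(cue in lowered for cue in HIGH_SIGNAL):
        return 0.9
    if len(lowered.split()) <= 3:  # short pleasantries like "Thanks!"
        return 0.1
    return 0.5


def consolidate(raw: List[str], threshold: float = 0.4) -> Tuple[List[Memory], List[Memory]]:
    """Score every memory, keep the important ones active, archive the rest."""
    scored = [Memory(t, score(t)) for t in raw]
    active = [m for m in scored if m.importance >= threshold]
    archived = [m for m in scored if m.importance < threshold]
    return active, archived


def reflect(active: List[Memory], top_n: int = 3) -> str:
    """Reflection: condense the highest-scoring memories into one takeaway.

    Assumption: joining strings stands in for an LLM summarization call.
    """
    top = sorted(active, key=lambda m: m.importance, reverse=True)[:top_n]
    return "Key takeaways: " + " | ".join(m.text for m in top)


log = ["Hi there!", "I always prefer TypeScript for scripts.",
       "Can you review the build config?", "Thanks!"]
active, archived = consolidate(log)
print(reflect(active))
print([m.text for m in archived])  # → ['Hi there!', 'Thanks!']
```

Archiving rather than deleting low-scoring memories keeps the active context small (fewer tokens per call) while leaving an audit trail.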

The Trust Crisis: When Memory Becomes a Liability 🛡️⚠️

This is where #30DaysOfTrust comes into play. Memory makes agents powerful, but it also makes them a massive security risk.

Imagine an internal HR agent. In the morning, the CEO uses the agent to discuss sensitive salary adjustments (Episodic Memory). In the afternoon, a junior developer asks the same agent, "What is the company budget for next year?"

If the agent’s memory isn't strictly siloed and governed, it could pull details of the CEO’s private salary discussion into its answer for the junior developer, either leaking them outright or blending them into a hallucinated response.
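The first line of defense is siloing: episodic memory is partitioned by user so that a search physically cannot scan another user's conversations. The sketch below is illustrative, and `SiloedEpisodicStore` is a hypothetical class. It assumes user IDs come from an authenticated session, not from the prompt, so a user cannot simply claim to be the CEO.

```python
from collections import defaultdict
from typing import DefaultDict, List


class SiloedEpisodicStore:
    """Episodic memory partitioned by user, so recall never crosses silos.

    Assumption: user_id is taken from an authenticated session.
    """

    def __init__(self) -> None:
        self._silos: DefaultDict[str, List[str]] = defaultdict(list)

    def remember(self, user_id: str, text: str) -> None:
        self._silos[user_id].append(text)

    def search(self, user_id: str, keyword: str) -> List[str]:
        # Only the caller's own silo is scanned; .get() avoids creating
        # empty silos for unknown users on read.
        silo = self._silos.get(user_id, [])
        return [t for t in silo if keyword.lower() in t.lower()]


store = SiloedEpisodicStore()
store.remember("ceo", "Discussed next year's budget: leadership salary adjustments.")
store.remember("junior_dev", "Asked how to run the test suite.")

print(store.search("junior_dev", "budget"))  # → []
print(store.search("ceo", "budget"))
```

Siloing alone handles private conversations, but shared knowledge (semantic memory) and tools still need finer-grained, policy-driven checks.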

The Solution: Memory Governance 🏛️🔒

You cannot deploy agents with long-term memory without a governance layer. This is why we need an Agent Access Security Broker (AASB).

Before an agent is allowed to retrieve a memory (whether Semantic or Episodic) or use a skill (Procedural), the request must pass through a Policy-as-Code layer (like Open Policy Agent). The broker intercepts the retrieval and asks: Does this specific user, in this specific context, have the right to access this specific memory?

If the answer is no, the memory is redacted before the LLM even sees it.
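The broker flow (intercept, decide, redact) can be sketched in a few lines. This is an illustrative sketch, not the AASB product's implementation. The `allow` function is a Python stand-in for a policy that, in a real OPA deployment, would be written in Rego. The broker would send the principal and record labels as `input` to OPA's Data API and act on the returned decision. The clearance levels and record labels are hypothetical.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical clearance ladder; a real deployment would define this in policy.
LEVELS = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class Principal:
    user_id: str
    clearance: str  # one of LEVELS


@dataclass(frozen=True)
class MemoryRecord:
    owner: str
    classification: str  # one of LEVELS
    text: str


def allow(principal: Principal, record: MemoryRecord) -> bool:
    """Policy decision: stand-in for a Rego rule evaluated by OPA."""
    if record.owner == principal.user_id:
        return True  # users may always recall their own memories
    return LEVELS[principal.clearance] >= LEVELS[record.classification]


def broker_retrieve(principal: Principal, candidates: List[MemoryRecord]) -> List[str]:
    """Intercept retrieval and redact anything the policy denies.

    Only the returned strings are placed in the LLM prompt, so denied
    content never reaches the model.
    """
    return [r.text if allow(principal, r) else "[REDACTED]" for r in candidates]


ceo_note = MemoryRecord("ceo", "confidential", "Leadership salary adjustments approved for next year.")
handbook = MemoryRecord("hr", "public", "The fiscal year starts in January.")
junior = Principal("junior_dev", "internal")

print(broker_retrieve(junior, [ceo_note, handbook]))
# → ['[REDACTED]', 'The fiscal year starts in January.']
```

Replacing denied items with a placeholder tells the model something was withheld, so it can say "I can't share that" instead of guessing. Dropping them entirely hides even their existence. Which is safer depends on whether the existence of a record is itself sensitive.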

The takeaway for developers: As you build your agents, don't just focus on how much they can remember. The true test of a production-ready agent is whether you can control exactly what it is allowed to forget.

#30DaysOfTrust #AISecurity #AgentSecurity #AIMemory #CognitiveAI #AASB #SecuriX #BuildInPublic #TrustByDefault
