Beyond the Prompt: Why Semantic Memory is the Foundation of Trust

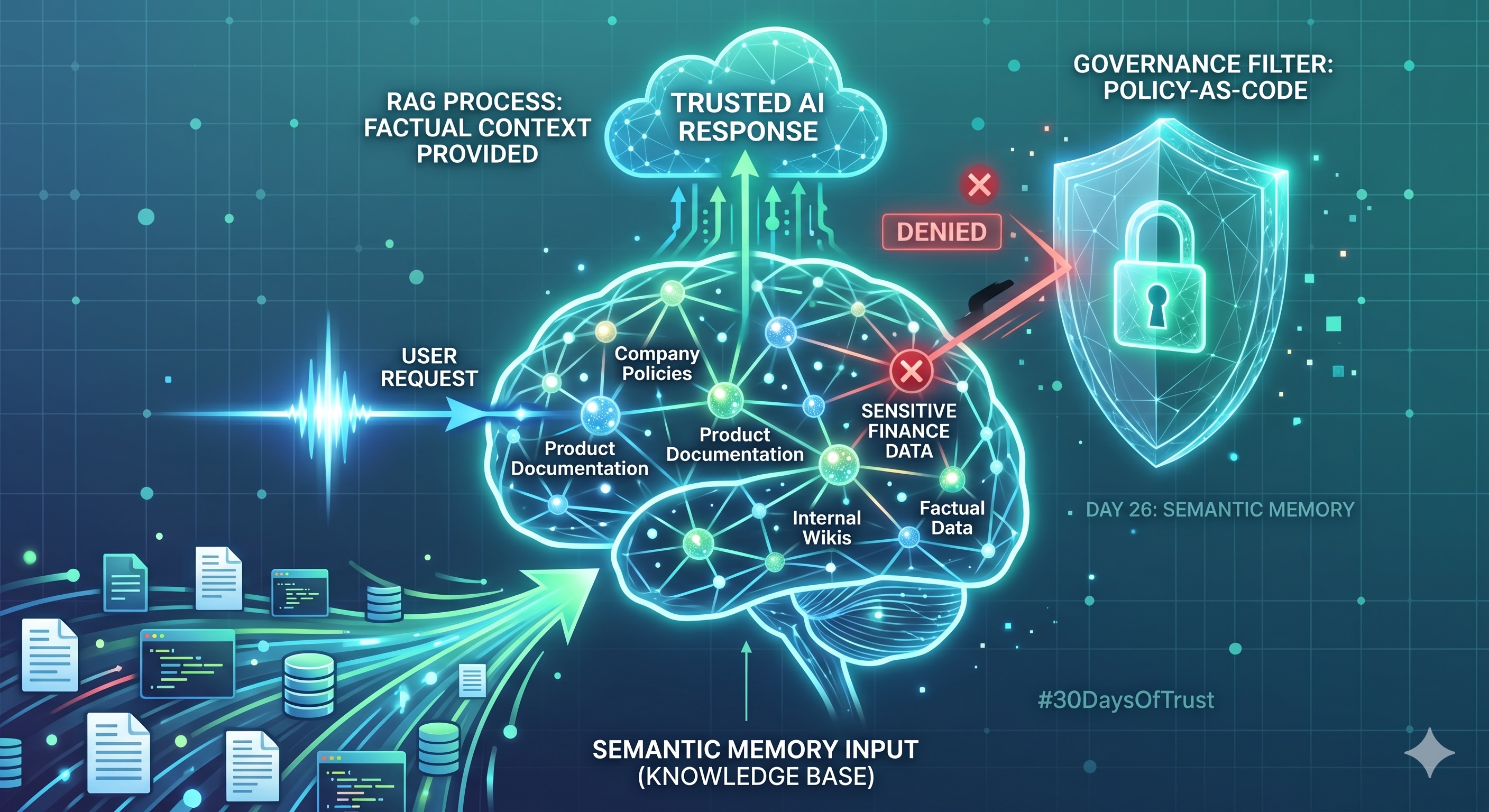

Learn how Semantic Memory and RAG form the factual foundation for AI agents, and why Policy-as-Code is essential to prevent data leaks.

Welcome to Day 26 of our #30DaysOfTrust Challenge!

If you’ve ever tried to get an LLM to answer a question about your specific company's internal documents, you’ve likely encountered its limits. Out of the box, an AI knows a lot about the world, but nothing about your world.

Semantic Memory is the solution. It is the agent's long-term, factual knowledge base. If we think of an agent as an employee, Semantic Memory is the corporate library they can access to find the right answer.

The Engine: Retrieval-Augmented Generation (RAG) 🚀📚

In the developer world, we implement Semantic Memory through a process called RAG.

- The Library (Vector Database): We take your PDFs, API docs, and spreadsheets and turn them into "embeddings"—mathematical representations of meaning. These are stored in a Vector DB (like Pinecone or Weaviate).

- The Librarian (The Search): When a user asks a question, the agent doesn't guess. It searches the Vector DB for the most relevant "facts."

- The Result: These facts are fed into the LLM as context, allowing it to provide a grounded, accurate answer based on your private data.

Why "Simple" RAG is a Security Risk 🛡️⚠️

Most founders and developers stop at the implementation phase. They build the pipeline and celebrate when the agent answers a question correctly. But in a B2B infrastructure setting, "correct" isn't enough. It must be authorized.

Imagine your agent has access to two documents:

- Public_Refund_Policy.pdf

- Internal_Financial_Projections_2027.pdf

If a customer asks about a refund, the agent should find the first document. But what if a clever user uses a "prompt injection" to trick the agent into searching for financial data? Without a security broker, the agent might blindly retrieve that sensitive data and summarize it for the unauthorized user.

The SecuriX Approach: Filtering at the Source 🏛️🔒

At Catalyst Ops, we believe that Semantic Memory must be governed by Policy-as-Code.

Before a "fact" is ever handed to the LLM, a security layer (like an Agent Access Security Broker) should intercept the retrieval. It checks the user's permissions via Open Policy Agent (OPA) and asks: "Does this user ID have the 'reader' role for the 'Financial' tag in the Vector DB?"

If the answer is no, the memory is blocked. The agent never sees it, so it can never leak it.

The Takeaway 💡

Semantic Memory makes your agent smart, but without a governance layer, it also makes your most sensitive data searchable by anyone. To build for the enterprise, you must secure the library.

#30DaysOfTrust #AISecurity #AgentSecurity #SemanticMemory #RAG #AASB #SecuriX #BuildInPublic #TrustByDefault

Spread the word

Join the Agentic Revolution.

Build secure AI agents with the first-ever Agent Access Security Broker (AASB).

Start BuildingCommunity Forum

Questions, Feedback & Discussions

Join the conversation

Recent Discussions 0 Comments

No questions yet. Be the first!