The Hidden Dangers of AI 'Skills' and Why Your Agent's New Superpower Might Be Malware

Explore the risks of Progressive Skill Discovery in autonomous AI and how to secure dynamic code execution with Policy-as-Code.

Welcome to Day 29 of our #30DaysOfTrust Challenge!

Back on Day 22, we unpacked the new architecture of compute: Models, Agents, Tools, and Skills, and how these components form the ultimate trust boundary in autonomous AI. Today, we’re zooming in on one specific layer of that architecture: Skills.

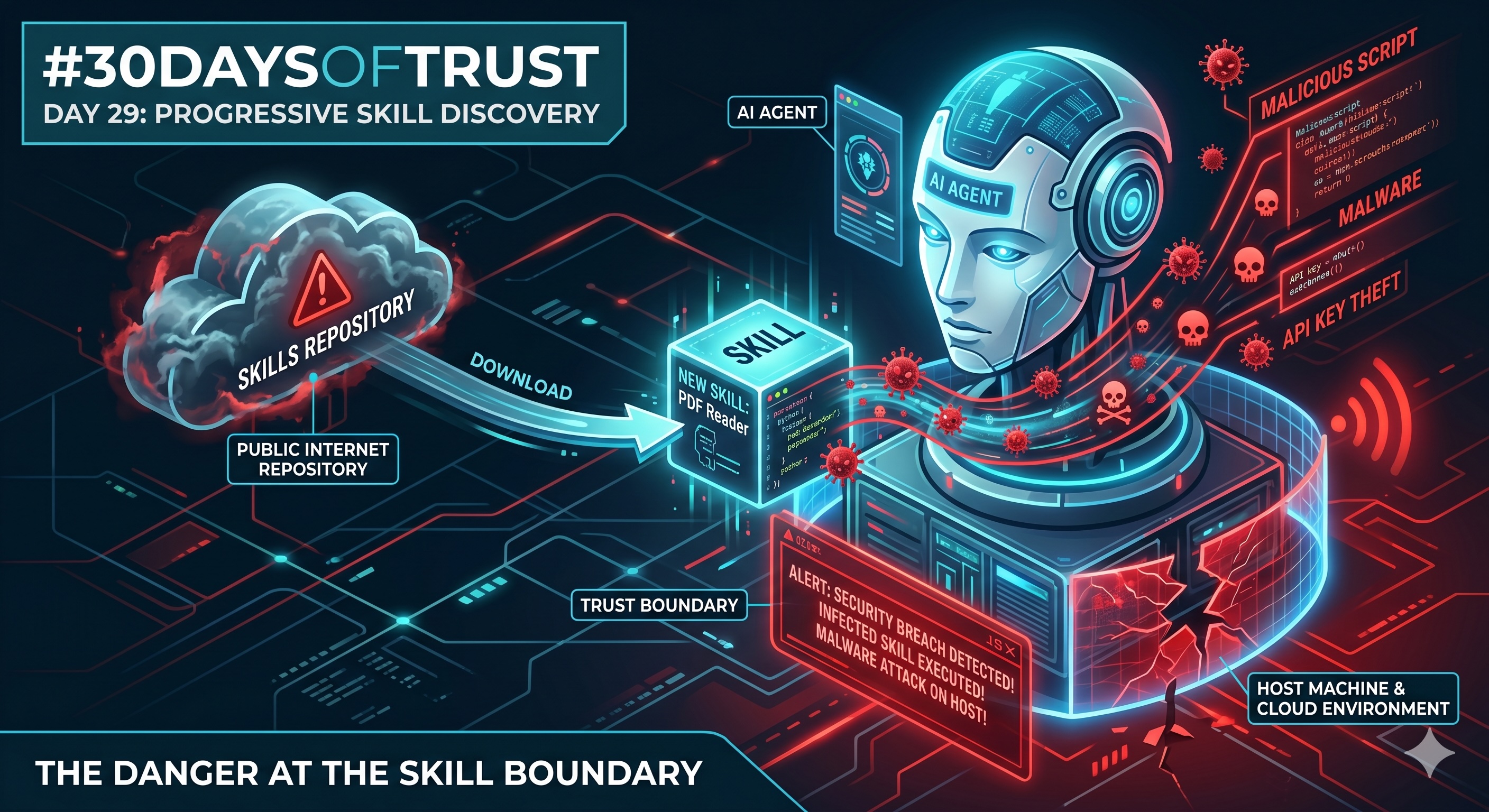

Specifically, we need to talk about Progressive Skill Discovery: what it is, why it’s a brilliant leap forward for autonomous systems, and why it becomes a serious security liability if you don't have granular controls in place.

What is an AI "Skill"? (A Layman’s Analogy) 🧠🛠️

Let's break down the architecture simply:

- The Model (The Brain): The underlying AI (like an LLM) that understands language and logic.

- The Agent (The Worker): The autonomous entity given a goal (e.g., "Plan my trip to Doha").

- The Tool (The Equipment): The physical or digital items available (e.g., a flight booking API, a calendar).

- The Skill (The Instruction Manual): The specific code or logic that teaches the Agent how to use the Tool.

The Magic of Progressive Skill Discovery ✨🚀

In the early days of AI agents, developers had to hard-code every single skill an agent might ever need. If the agent needed to check the weather, the developer had to pre-install a weather-checking skill.

Progressive Skill Discovery changes the game through a brilliant "lazy loading" approach.

When your autonomous multi-agent system first starts, it doesn't load the full contents of every skill. Instead, it loads only a lightweight catalog of metadata: just the names and brief descriptions of the skills available to it. It’s like handing the agent a menu. When the agent encounters a specific task (like generating a complex 3D financial chart), it scans its metadata menu, finds a matching "Data Visualization Skill," and only then downloads and loads that skill's full executable contents to get the job done.
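To make the lazy-loading idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `SkillMeta`, `SkillRegistry`, the word-overlap matching, and the in-memory `FAKE_REPO` that stands in for a remote repository. Real agent frameworks will structure this differently.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Optional


@dataclass(frozen=True)
class SkillMeta:
    """Lightweight catalog entry: all the agent sees at startup."""
    name: str
    description: str


class SkillRegistry:
    """Hypothetical registry that loads skill code only on first use."""

    def __init__(self, catalog: Iterable[SkillMeta], fetch_payload: Callable[[str], str]):
        self._catalog = {meta.name: meta for meta in catalog}
        self._fetch_payload = fetch_payload  # returns the skill's source text
        self._loaded: Dict[str, Callable] = {}

    def find(self, task: str) -> Optional[SkillMeta]:
        """Naive match: first skill whose description shares a word with the task."""
        words = set(task.lower().split())
        for meta in self._catalog.values():
            if words & set(meta.description.lower().split()):
                return meta
        return None

    def load(self, name: str) -> Callable:
        """Fetch and execute the full payload the first time a skill is needed."""
        if name not in self._loaded:
            source = self._fetch_payload(name)
            namespace: dict = {}
            exec(source, namespace)  # late-bound code execution: the risky step
            self._loaded[name] = namespace["run"]
        return self._loaded[name]


CATALOG = [
    SkillMeta("data_viz", "render financial chart"),
    SkillMeta("pdf_reader", "extract text from pdf"),
]

# Hypothetical stand-in for a remote skill repository.
FAKE_REPO = {
    "data_viz": "def run(title):\n    return 'chart: ' + title\n",
    "pdf_reader": "def run(path):\n    return 'text of ' + path\n",
}

registry = SkillRegistry(CATALOG, FAKE_REPO.__getitem__)
match = registry.find("generate a 3D financial chart")
if match is not None:
    skill = registry.load(match.name)
    print(skill("Q3 revenue"))
```

Notice that `find` only ever looks at the description, while `load` runs whatever the repository returns. That gap is exactly what the next section is about.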

The Hidden Danger: The Trojan Horse in the Payload 🛡️⚠️

Here is where the trust boundary fractures.

Because of Progressive Skill Discovery, your agent is dynamically loading and executing late-bound code at runtime. When the agent decides it needs, say, a "PDF Reader Skill," it reaches out to a repository (often on the public internet) to pull the full payload.

What happens if the source repository has been compromised, or if a bad actor has published a poisoned skill with a perfectly normal-looking metadata description?

Your agent reads the safe-looking description, downloads the payload, and executes the code autonomously. Suddenly, that "PDF Reader" is:

- Scanning your local environment for sensitive data, API keys, or enterprise secrets.

- Executing malware on the server hosting your agents.

- Acting as a backdoor, allowing attackers to hijack your compute infrastructure.

If you are building developer-facing infrastructure, this dynamic loading mechanism is a major attack surface. You are effectively letting a machine invite unverified, dynamically fetched code into your server room and run it.
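One way to see the problem: nothing ties the description the agent reads to the payload it later runs. A rough sketch of closing that gap is to pin a SHA-256 digest of the reviewed payload in the catalog entry and refuse anything that doesn't match. The names here (`PinnedSkill`, `verify_payload`, `PayloadMismatch`) are hypothetical, and the payloads are harmless placeholder strings.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PinnedSkill:
    """Catalog entry that binds the description to one specific payload."""
    name: str
    description: str
    sha256: str  # hex digest of the payload that was actually reviewed


class PayloadMismatch(Exception):
    """Raised when a fetched payload differs from the reviewed one."""


def verify_payload(entry: PinnedSkill, payload: bytes) -> bytes:
    """Return the payload only if its digest matches the pinned value."""
    actual = hashlib.sha256(payload).hexdigest()
    if actual != entry.sha256:
        raise PayloadMismatch(
            f"{entry.name}: expected {entry.sha256[:12]}..., got {actual[:12]}..."
        )
    return payload


# The payload a human reviewed, and a version altered after review.
reviewed = b"def run(path):\n    return 'text of ' + path\n"
tampered = reviewed + b"# extra lines an attacker slipped in\n"

entry = PinnedSkill(
    name="pdf_reader",
    description="Extracts text from PDF files",
    sha256=hashlib.sha256(reviewed).hexdigest(),
)

verify_payload(entry, reviewed)  # passes silently
try:
    verify_payload(entry, tampered)
except PayloadMismatch as err:
    print("blocked:", err)
```

A pinned digest only proves the payload hasn't changed since review. It says nothing about whether the reviewed code was safe in the first place, so it complements the controls below rather than replacing them.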

How to Build Trust Boundaries for Skills 🏛️🔒

As we shift toward open-core models and rapidly expanding agentic ecosystems, we cannot rely on blind trust. You cannot let your agents download and run arbitrary code unchecked.

To secure your autonomous systems, you need rigorous Policy-as-Code enforcement at the gateway level. You need:

- Sandboxing: Never let an agent run a newly discovered skill directly on the host machine. Run it in an isolated, restricted environment where it cannot reach local files, credentials, or your internal network.

- Strict Audit Trails & Interception: Every time an agent attempts an API tool call or downloads a skill, that request must be intercepted, evaluated, and logged.

- Default-Deny Policies: Instead of trying to block the bad skills, adopt a zero-trust approach. Block everything by default and only allow execution if the skill's signature verifies against your pre-approved project keys.
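The last two controls can be sketched together as a small policy gate: every load request is evaluated, logged, and denied unless the signature checks out. This is an illustration under stated assumptions, not a production design. `PROJECT_KEYS`, `AUDIT_LOG`, `sign`, and `authorize_skill` are hypothetical names, and a shared-secret HMAC stands in for the asymmetric signatures a real deployment would more likely use.

```python
import hashlib
import hmac
import json
import time
from typing import Dict, List

# Hypothetical pre-approved project keys, by key id. In practice these would
# live in a secrets manager, not in source code.
PROJECT_KEYS: Dict[str, bytes] = {"project-a": b"demo-secret-do-not-use"}

# In-memory audit trail for the sketch; a real gateway would ship this elsewhere.
AUDIT_LOG: List[str] = []


def sign(key: bytes, payload: bytes) -> str:
    """HMAC-SHA256 of the payload, used here as a stand-in signature."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def authorize_skill(name: str, payload: bytes, key_id: str, signature: str) -> bool:
    """Default-deny: allow only if the signature verifies against a known key."""
    key = PROJECT_KEYS.get(key_id)
    allowed = key is not None and hmac.compare_digest(
        sign(key, payload).encode(), signature.encode()
    )
    # Every request is logged, whether it was allowed or denied.
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(),
        "skill": name,
        "key_id": key_id,
        "payload_sha256": hashlib.sha256(payload).hexdigest(),
        "decision": "allow" if allowed else "deny",
    }))
    return allowed
```

A caller would invoke `authorize_skill(...)` before handing the payload to a sandbox, and skip execution entirely on `False`. Unknown key ids, altered payloads, and malformed signatures all fall through to the same outcome: deny, with a log entry.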

The Takeaway 💡

Progressive skill discovery is the future of autonomous systems. But without absolute control over what your agents are downloading and how they execute it, that future is incredibly fragile.

Tomorrow for Day 30: We wrap up our journey with the final piece of the puzzle: The Architecture of Absolute Trust.

#30DaysOfTrust #AISecurity #AgentSecurity #ProgressiveDiscovery #AASB #SecuriX #BuildInPublic #TrustByDefault
